Brain-Machine Interfaces: The Perception-Action Closed Loop

By J. d. R. Millán

Neural signals, sophisticated processing techniques, sensory feedback, and human conscious control of cognitive states can be combined in a closed control loop to simulate natural motor control more closely.

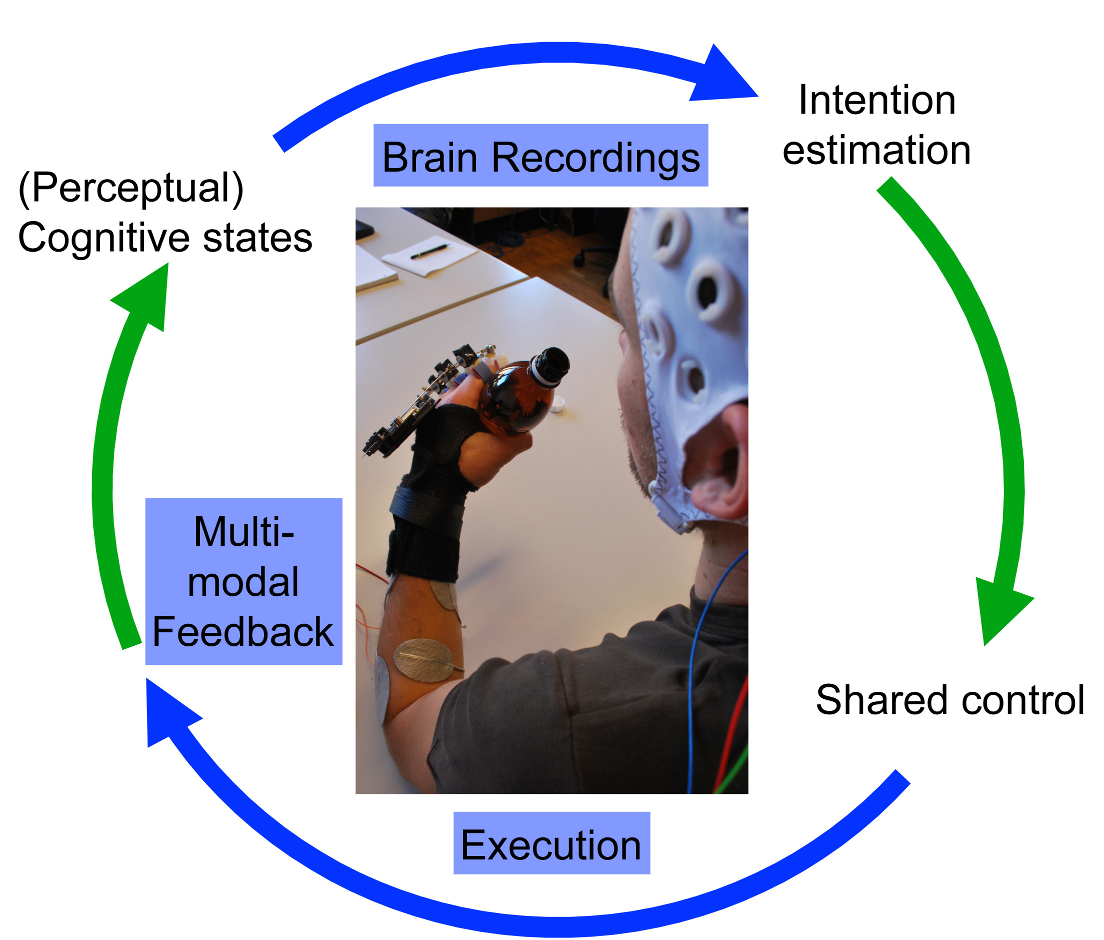

A brain-machine interface (BMI) transforms neural activity into action and sensation into perception (Figure 1). In a BMI system, neural signals recorded from the brain are fed into a decoding algorithm that translates them into motor outputs to control a variety of practical devices for motor-disabled people [1-5]. Feedback from the prosthetic device, conveyed to the user either via normal sensory pathways or directly through brain stimulation, establishes a closed control loop.

Figure 1: Brain-machine interface loop. A BMI transforms brain activity (recorded at the micro-, meso- or macro-level) into actions by decoding the user’s intention. The estimated intention is augmented with contextual information (external input plus the internal state of the neuroprosthesis) using shared control. Execution of actions conveys rich multimodal feedback to the user, who makes perceptual cognitive decisions that dynamically modulate their goal-directed behavior.

An important aspect of a BMI is its capability to distinguish between different patterns of brain activity, each associated with a particular intention or mental task. Adaptation is therefore a key component of a BMI: on the one hand, users must learn to modulate their neural activity so as to generate distinct brain patterns; on the other hand, machine learning techniques must discover the individual brain patterns that characterize the mental tasks executed by the user. In essence, a BMI is a two-learner system that must engage in a mutual adaptation process [6, 7].
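The machine side of this mutual adaptation can be illustrated with a minimal sketch. It is not the decoder used in the cited work: it assumes a simple nearest-class-mean classifier over hypothetical two-dimensional feature vectors, with class means that follow the user through an exponential moving average. The class name `AdaptiveMeanClassifier`, the labels, and the `learning_rate` value are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Sequence


@dataclass
class AdaptiveMeanClassifier:
    """Nearest-class-mean decoder whose class means track the user over time."""

    learning_rate: float = 0.1  # hypothetical adaptation speed
    means: Dict[str, List[float]] = field(default_factory=dict)

    def update(self, features: Sequence[float], label: str) -> None:
        """Move the mean of `label` a step toward a new labeled sample."""
        if label not in self.means:
            self.means[label] = list(features)
            return
        mean = self.means[label]
        for i, x in enumerate(features):
            mean[i] += self.learning_rate * (x - mean[i])

    def predict(self, features: Sequence[float]) -> str:
        """Return the label whose mean is closest (squared Euclidean distance)."""
        if not self.means:
            raise ValueError("classifier has no classes yet")

        def sq_dist(mean: Sequence[float]) -> float:
            return sum((x - m) ** 2 for x, m in zip(features, mean))

        return min(self.means, key=lambda label: sq_dist(self.means[label]))


# Initial calibration: one (made-up) feature vector per mental task.
decoder = AdaptiveMeanClassifier(learning_rate=0.5)
decoder.update([1.0, 0.0], "left_hand")
decoder.update([0.0, 1.0], "right_hand")
first_guess = decoder.predict([0.9, 0.2])

# The user's "left_hand" pattern drifts; labeled samples let the decoder follow.
for _ in range(5):
    decoder.update([0.2, 0.2], "left_hand")
after_drift = decoder.predict([0.25, 0.3])
```

In this toy example, a decoder frozen after the initial calibration would assign the drifted sample `[0.25, 0.3]` to the wrong class, while the adapted one still recognizes it. The user side of the adaptation, learning to produce more distinct patterns, is not modeled here.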

Future neuroprosthetics (robots and exoskeletons controlled via a BMI) will be tightly coupled with the user in such a way that the resulting system can replace and restore impaired limb functions, because it is controlled by the same neural signals as their natural counterparts. However, robust and natural interaction of subjects with prostheses over long periods of time remains a major challenge. To tackle this challenge, we can draw inspiration from natural motor control, where goal-directed behavior is dynamically modulated by perceptual feedback resulting from executed actions.

Brain signals for a BMI can be recorded from single neurons using microelectrode arrays implanted in the brain (single unit activity, SUA) or as the concerted activity of neuronal populations of different sizes, depending on the position of the electrodes: implanted in the brain (local field potential, LFP), placed on the surface of the brain (electrocorticography, ECoG), or placed on the scalp (electroencephalography, EEG). These approaches provide complementary advantages, and a combination of technologies may be necessary to achieve the ultimate goal of controlling neuroprostheses capable of replicating any kind of body movement as easily as able-bodied people control their natural limbs [8].

Whatever the origin of the brain signals, current BMIs partly emulate human motor control, as they decode cortical correlates of movement parameters (from movement onset to direction to instantaneous velocity) in order to generate the sequence of movements for the neuroprosthesis. A closer look, though, shows that motor control results from the combined activity of the cerebral cortex, subcortical areas and spinal cord. In fact, many elements of skilled movements, from manipulation to walking, are mainly handled at the brainstem and spinal cord level, with cortical areas providing an abstraction of the desired movement. This hierarchical organization supports the hypothesis that complex behaviours can be controlled using the low-dimensional output of a BMI in conjunction with intelligent devices in charge of performing low-level commands, akin to the role of subcortical and spinal cord levels in human motor control.

Our brain-controlled wheelchair (Figure 2) illustrates the future of intelligent neuroprostheses that, like our spinal cord and musculoskeletal system, work in tandem with motor commands decoded from the user’s cerebral cortex [9]. Users can drive it reliably and safely over long periods of time thanks to the incorporation of shared control (or context awareness) techniques. Shared control relieves users of the need to continuously deliver all the necessary low-level control parameters and thus reduces their cognitive workload and facilitates split attention [10].
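The idea of shared control can be sketched in a few lines. The example below is only an illustration under stated assumptions, not the architecture described in [9]: it assumes a decoder that outputs one of four discrete intents, two hypothetical range readings (`left_clearance`, `right_clearance`, in metres), and made-up gains and speeds. The device slows down near obstacles and steers away from the closer side, so the user only has to supply the high-level intent.

```python
from dataclasses import dataclass


@dataclass
class Command:
    speed: float  # forward speed in m/s
    turn: float   # turn rate in rad/s, positive = left


# Hypothetical mapping from decoded intent to a nominal motor command.
NOMINAL = {
    "forward": (0.5, 0.0),
    "left": (0.3, 0.6),
    "right": (0.3, -0.6),
    "stop": (0.0, 0.0),
}


def shared_control(
    intent: str,
    left_clearance: float,
    right_clearance: float,
    safe_distance: float = 1.0,   # assumed distance at which avoidance starts
    avoid_gain: float = 0.8,      # assumed strength of the steering correction
) -> Command:
    """Blend the decoded intent with obstacle context from the device's sensors."""
    if intent not in NOMINAL:
        raise ValueError(f"unknown intent: {intent!r}")
    speed, turn = NOMINAL[intent]
    if intent == "stop":
        return Command(0.0, 0.0)

    left = max(0.0, left_clearance)
    right = max(0.0, right_clearance)

    # Slow down in proportion to the nearest obstacle.
    speed *= min(1.0, min(left, right) / safe_distance)

    # Steer away from whichever side is closer than the safe distance.
    if left < safe_distance:
        turn -= avoid_gain * (1.0 - left / safe_distance)
    if right < safe_distance:
        turn += avoid_gain * (1.0 - right / safe_distance)
    return Command(speed, turn)


open_corridor = shared_control("forward", left_clearance=5.0, right_clearance=5.0)
wall_on_left = shared_control("forward", left_clearance=0.5, right_clearance=5.0)
```

With free space on both sides the nominal command passes through unchanged; with a wall 0.5 m to the left, the same "forward" intent yields a slower command that turns right. The user never has to issue the corrective turn.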

A further component that will facilitate intuitive and natural control of motor neuroprosthetics is the incorporation of rich multimodal feedback and of the neural correlates of perceptual processes resulting from this feedback. Realistic sensory feedback must convey artificial tactile and proprioceptive information (i.e., awareness of the position and movement of the neuroprosthesis) [11]. This type of sensory information has the potential to significantly improve control of the prosthesis by allowing the user to feel the environment in cases in which natural sensory afferents are compromised, either through other senses or by stimulating the body or even the brain directly to recover the lost sensation. Furthermore, rich multimodal feedback is essential to promote the user’s agency and ownership of the prosthesis.

Finally, we can decode and integrate into the prosthetic control loop information about the user’s perceptual cognitive processes that are crucial for volitional interaction, such as awareness of errors made by the device [12], anticipation of critical decision points, and lapses of attention. As in natural motor control, this information is associated with the processing of feedback and should dynamically modulate interaction.
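As a rough illustration of how decoded error awareness could modulate interaction, the sketch below assumes a hypothetical error-potential detector that returns, after each executed action, a probability that the user perceived the action as erroneous. When that probability reaches an assumed threshold, the device undoes the action. The function name `run_trial`, the one-dimensional position, and the threshold value are illustrative choices, not taken from [12].

```python
from typing import Iterable, List, Tuple


def run_trial(
    steps: Iterable[Tuple[int, float]],
    error_threshold: float = 0.7,  # assumed decision threshold for the detector
) -> List[int]:
    """Execute (step, p_error) pairs on a 1-D position and undo flagged steps.

    `step` is the movement chosen by the decoder; `p_error` is the (hypothetical)
    detector output measured after the user has seen the movement.
    Returns the position after each step.
    """
    position = 0
    trace = []
    for step, p_error in steps:
        position += step              # device executes the decoded command
        if p_error >= error_threshold:
            position -= step          # user perceived an error: revert the action
        trace.append(position)
    return trace


# The third action is flagged as erroneous and reverted.
trace = run_trial([(+1, 0.1), (+1, 0.2), (-1, 0.9), (+1, 0.05)])
print(trace)  # [1, 2, 2, 3]
```

Reverting the action is only one possible use of this signal; the same detector output could instead be used as a label to adapt the decoder.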

Figure 2: Brain-controlled wheelchair. Users can drive it reliably and safely over long periods of time thanks to the incorporation of shared control (or context awareness) techniques. This wheelchair illustrates the future of intelligent neuroprostheses that, like our spinal cord and musculoskeletal system, work in tandem with motor commands decoded from the user’s cerebral cortex. Shared control relieves users of the need to continuously deliver all the necessary low-level control parameters and thus reduces their cognitive workload.

For Further Reading

1. L. Tonin, T. Carlson, R. Leeb, and J. d. R. Millán, “Brain-controlled telepresence robot by motor-disabled people,” in Proc. 33rd Annual Intl. Conf. IEEE Eng. Med. Biol. Soc., 2011.

2. L. R. Hochberg, D. Bacher, B. Jarosiewicz, N. Y. Masse, J. D. Simeral, J. Vogel, S. Haddadin, J. Liu, S. S. Cash, P. van der Smagt, and J. P. Donoghue, “Reach and grasp by people with tetraplegia using a neurally controlled robotic arm,” Nature, vol. 485, pp. 372-375, 2012.

3. J. L. Collinger, B. Wodlinger, J. E. Downey, W. Wang, E. C. Tyler-Kabara, D. J. Weber, A. J. C. McMorland, M. Velliste, M. L. Boninger, and A. B. Schwartz, “High-performance neuroprosthetic control by an individual with tetraplegia,” The Lancet, vol. 381, pp. 557-564, 2013.

4. R. Leeb, S. Perdikis, L. Tonin, A. Biasiucci, M. Tavella, M. Creatura, A. Molina, A. Al-Khodairy, T. Carlson, and J. d. R. Millán, “Transferring brain-computer interface beyond the laboratory: Successful application control for motor-disabled users,” Artif. Intell. Med., vol. 59, pp. 121-132, 2013.

5. S. Perdikis, R. Leeb, J. Williamson, A. Ramsey, M. Tavella, L. Desideri, E.-J. Hoogerwerf, A. Al-Khodairy, R. Murray-Smith, and J. d. R. Millán, “Clinical evaluation of BrainTree, a motor imagery hybrid BCI speller,” J. Neural Eng., vol. 11, p. 036003, 2014.

6. J. d. R. Millán, P. W. Ferrez, F. Galán, E. Lew, and R. Chavarriaga, “Non-invasive brain-machine interaction,” Int. J. Pattern Recognition Art. Intell., vol. 22, pp. 959-972, 2008.

7. J. M. Carmena, “Advances in neuroprosthetic learning and control,” PLoS Biol., vol. 11, p. e1001561, 2013.

8. J. d. R. Millán and J. M. Carmena, “Invasive or noninvasive: Understanding brain-machine interface technology,” IEEE Eng. Med. Biol. Mag., vol. 29, pp. 16-22, 2010.

9. T. Carlson and J. d. R. Millán, “Brain-controlled wheelchairs: A robotic architecture,” IEEE Robot. Autom. Mag., vol. 20, pp. 65-73, 2013.

10. M. Tavella, R. Leeb, R. Rupp, and J. d. R. Millán, “Towards natural non-invasive hand neuroprostheses for daily living,” in Proc. 32nd Annual Intl. Conf. IEEE Eng. Med. Biol. Soc., 2010.

11. S. Raspopovic, M. Capogrosso, F. M. Petrini, M. Bonizzato, J. Rigosa, G. Di Pino, J. Carpaneto, M. Controzzi, T. Boretius, E. Fernandez, G. Granata, C. G. Oddo, L. Citi, A. L. Ciancio, C. Cipriani, M. C. Carrozza, W. Jensen, E. Guglielmelli, T. Stieglitz, P. M. Rossini, and S. Micera, “Restoring natural sensory feedback in real-time bidirectional hand prostheses,” Sci. Transl. Med., vol. 6, p. 222ra19, 2014.

12. R. Chavarriaga, A. Sobolewski, and J. d. R. Millán, “Errare machinale est: The use of error-related potentials in brain-machine interfaces,” Front. Neurosci., vol. 8, p. 208, 2014.

Contributor

José del R. Millán is the Defitech Professor at the Ecole Polytechnique Fédérale de Lausanne (EPFL), where he explores the use of brain signals for multimodal interaction and, in particular, the development of non-invasive brain-controlled robots and neuroprostheses. In this multidisciplinary research effort, Dr. Millán is bringing together his pioneering work in the two fields of brain-machine interfaces and adaptive intelligent robotics. He received his Ph.D. in computer science from the Univ. Politècnica de Catalunya (Barcelona, Spain) in 1992.

Nitish V. Thakor (F'1994) is a Professor of Biomedical Engineering at Johns Hopkins University, Provost Chair Professor at National University of Singapore, and also the Director of the Singapore Institute for Neurotechnology (SINAPSE) at the National University of Singapore. His expertise is in the field of Neurotechnology and Medical Instrumentation.

Nigel Lovell is Scientia Professor at the Graduate School of Biomedical Engineering, University of New South Wales (UNSW), Sydney, Australia.

Dr Cuntai Guan received his Ph.D. degree in Electrical and Electronic Engineering from Southeast University in 1993. He is currently Principal Scientist and Department Head at the Institute for Infocomm Research, Agency for Science, Technology and Research (A*STAR), Singapore. His current research interests include neural and biomedical signal processing; neural and cognitive process and its clinical application; brain-computer interface algorithms, systems and its applications.

Dr Kai Keng Ang received his Ph.D. degree in computer engineering from Nanyang Technological University, Singapore. He is currently the Head of Brain-Computer Interface Laboratory and a Scientist with the Institute for Infocomm Research, Agency for Science, Technology and Research, Singapore. His current research interests include brain-computer interfaces, computational intelligence, machine learning, pattern recognition, and signal processing.

Mr. Christopher Kuah is a Principal Occupational Therapist currently holding the post of Allied Health Coordinator at the Centre for Advanced Rehabilitation Therapeutics at Tan Tock Seng Hospital. He received his professional qualification in 1995 and attained the Master of Science in Neurorehabilitation from Brunel University (UK) in 2002. His key neurorehabilitation interests include management of clients with hemiplegia and those with complex neuro-disability as a result of stroke and acquired brain injuries. The current focus of his clinical and research work involves development of clinical programs incorporating rehabilitation technologies encompassing robotics, brain-computer interface, and sensor technologies for post-stroke upper limb recovery.

Dr. Effie Chew is Senior Consultant in Rehabilitation Medicine, Division of Neurology, University Medicine Cluster, National University Hospital (NUH), Singapore. She received her MBBS from the University of Melbourne, Australia and her Membership to the Royal College of Physicians (Edinburgh, UK). She completed her Advanced Specialist Training in Rehabilitation Medicine in Singapore and went on to complete a Fellowship in Clinical Neurorehabilitation at Spaulding Rehabilitation Hospital, Harvard Medical School, where she worked in the areas of noninvasive brain stimulation for neuromodulation and neurorecovery, as well as neuropharmacology for cognitive recovery in traumatic brain injury and robotics and motor learning in recovery post-stroke. These continue to be her current areas of research interests.

Dr Karen Sui Geok Chua, MBBS (Singapore), FRCP (Edin), FAMS, is currently senior consultant rehabilitation physician practicing at the Tan Tock Seng Hospital Rehabilitation Centre, Singapore. Her special interests include Neurorehabilitation, Spasticity management, including Botulinum toxin therapy, neurolytic blocks and rehabilitation robotics and technology translation.
